It took direct aim at Nvidia earlier this spring by touting an improved version of the AMD/Xilinx FPGA-based inferencing card (VCK5000) as having a significantly better TCO for inferencing than most of Nvidia’s lineup.

There are, of course, many other AI chip newcomers whose offerings are designed to speed up training and inferencing. Graphcore, which did participate in the last MLPerf training exercise and says its technology is good for both training and inferencing, did not participate in this exercise. IBM has made similar claims for Power10, but hasn’t participated in MLPerf. Intel has recently been touting its newer CPU’s enhanced inference capability. Intel, which participated in the Closed Inference Division (apples-to-apples) in the last round, didn’t do so this time instead, it opted for the Open Division, which allows greater flexibility of system components and hardware and isn’t deemed an apples-to-apples comparison.

Krai worked with Qualcomm and had Qualcomm Cloud AI 100-based entries in the datacenter and edge categories. FuriosaAI entered results from a Supermicro system using the FuriosaAI Warboy chip in the Closed Edge category. NEUCHIPS‘ FPGA-based RecAccel was used on DLRM (Deep Learning Recommender Models). There were a few other accelerators in the mix across the three benchmark suites. … So really substantial performance difference, if you sort of put that in the context of how many servers would it take to get to equivalent performance, that really sort of cuts into their per-watt advantage.” With that said, we outperform them on both workloads and, in the case of SSD-Large, by a factor of about three or four. “There are a couple of places on the CNN-type networks where frankly, Qualcomm has delivered a pretty good solution as it relates to efficiency. Its Qualcomm Cloud AI 100 accelerator is intended not only to be performant, but also power-efficient, and that strength was on display in the latest round.ĭuring Nvidia’s press/analyst pre-briefing, David Salvator (senior product manager, AI Inference and Cloud) acknowledged Qualcomm’s strong power showing. It turned in solid performances, particularly on edge applications where it dominated. Qualcomm is a MLPerf rarity at the moment in terms of its willingness to vie with Nvidia. I hope I’m wrong, as I think there is tremendous value in both comparative benchmarks and - even more importantly - in the improvements in software.” I also fear that the next iteration is more likely to see two vendors dwindle to one than increase to three. That’s a lot of work, and only Nvidia and Qualcomm are making the investments.

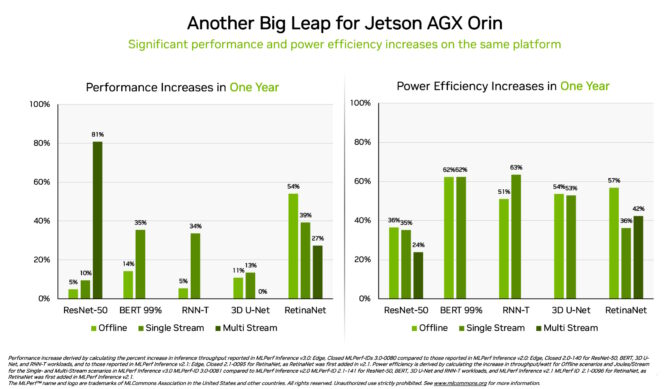

I count some 5,000 results in the spreadsheet. Karl Freund, founder and principal at Cambrian AI Research, said: “My take is that most companies don’t see enough ROI from the massive effort required to run these benchmarks. The question that’s dogged MLPerf is: “where’s everyone else?” Given the proliferation of AI chip start-ups with offerings and rumblings by CPU makers - notably Intel and IBM - that their newer CPUs have enhanced inference capabilities, one would expect more participation from a growing number of accelerator/system providers. To a large extent, MLPerf remains mostly a showcase for systems based on Nvidia’s portfolio of accelerators. MLPerf divides the exercises into Divisions and Categories to make cross-system comparisons fairer and easier, as shown in the slide below. The latest inference round had three distinct benchmarks: Inference v2.0 (datacenter and edge) Mobile v2.0 (mobile phones) and Tiny v0.7 (IoT). Of the two, model training is more compute-intensive and tends to fall into the HPC bailiwick inferencing is less so, but still taxing. The MLPerf benchmark comes around four times a year, with inferencing results reported in Q1 and Q3 and training results reported in Q2 and Q4. I’m especially excited to see greater adoption of power and energy measurements, highlighting the industry’s focus on efficient AI.” Bottom line: AI systems are steadily improving.ĭavid Kanter, executive director of MLCommons, the parent organization for MLPerf, said: “This was an outstanding effort by the ML community, with so many new participants and the tremendous increase in the number and diversity of submissions. Nevertheless, overall performance and participation was up, with 19 organizations submitting twice as many results and six times as many power measurements in the main Inference 2.0 (datacenter and edge) exercise. Roughly four years old, MLPerf still struggles to attract wider participation from accelerator suppliers. 
MLCommons today released its latest MLPerf inferencing results, with another strong showing by Nvidia accelerators inside a diverse array of systems. Since 1987 - Covering the Fastest Computers in the World and the People Who Run Them
